Superpixels for enhanced detection of breast cancer

Deep learning methods are increasingly used to aid medical diagnosis. At IMT Atlantique, Pierre-Henri Conze is taking part in this drive to use artificial intelligence algorithms for healthcare by focusing on breast cancer. His work combines superpixels defined on mammograms and deep neural networks to obtain better detection rates for tumor areas, thereby limiting false positives.

In France, one in eight women will develop breast cancer in her lifetime. Every year 50,000 new cases are recorded in the country, a figure which has been on the rise for several years. At the same time, the survival rate has also continued to rise. The five-year survival rate after being diagnosed with breast cancer increased from 80% in 1993 to 87% in 2010. These results can be correlated with a rise in awareness campaigns and screening for breast tumors. Nevertheless, large-scale screening programs still have room for improvement. One of the major limitations of this sort of screening is that it results in far too many false positives, meaning patients must come back for additional testing. This sometimes leads to needless treatment with serious consequences: mastectomy, radiotherapy, chemotherapy, etc. “Out of 1,000 participants in a screening, 100 are called back, while on average only 5 are actually affected by breast cancer,” explains Pierre-Henri Conze, a researcher in image processing. The work he carries out at IMT Atlantique in collaboration with Mines ParisTech strives to reduce this number of false positives by using new analysis algorithms for breast X-rays.
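
The figures quoted by the researcher can be turned into a quick back-of-the-envelope calculation. The sketch below is only illustrative: the function name `screening_summary` is hypothetical, and it assumes that the 5 women actually affected are all among the 100 who are called back, which the quote does not state explicitly.

```python
def screening_summary(screened, recalled, confirmed):
    """Summarise a screening round from three counts.

    Assumption (not stated in the article): every confirmed case
    is among the recalled participants.
    """
    false_positives = recalled - confirmed
    return {
        # Share of all participants who are called back
        "recall_rate": recalled / screened,
        # Share of called-back participants who really have cancer
        "positive_predictive_value": confirmed / recalled,
        # Called-back participants who turn out to be healthy
        "false_positives": false_positives,
        "false_positive_share_of_recalls": false_positives / recalled,
    }


# Figures quoted in the article: 1,000 screened, 100 called back, 5 affected
summary = screening_summary(screened=1000, recalled=100, confirmed=5)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Under that assumption, 95 of the 100 women called back do not have breast cancer, which is the share the new analysis algorithms aim to reduce.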

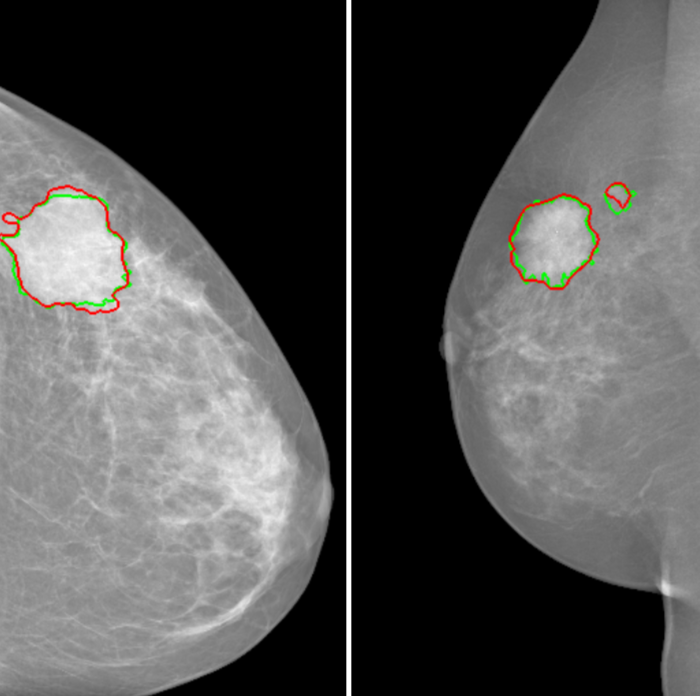

The principle is becoming better known: artificial intelligence tools are used to automatically identify tumors. Computer-aided detection helps radiologists and doctors by identifying masses, one of the main clinical signs of breast cancer. This improves diagnosis and saves time since multiple readings do not then have to be carried out systematically. But it all comes down to the details: how exactly can the software tools be made effective enough to help doctors? Pierre-Henri Conze sums up the issue: “For each pixel of a mammogram, we have to be able to tell the doctor if it belongs to a healthy area or a pathological area, and with what degree of certainty.”

But there is a problem: algorithmic processing of each pixel is time-consuming. Pixels are also subject to interference during capture: this is “noise,” like when a picture is taken at night and certain pixels are whited out. This makes it difficult to determine whether an altered pixel is located in a pathological zone or not. The researcher therefore relies on “superpixels.” These are homogeneous areas of the image obtained by grouping together neighboring pixels. “By using superpixels, we limit errors related to the noise in the image, while keeping the areas small enough to limit any possible overlapping between healthy and tumor areas,” explains the researcher.
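
To give a feel for why grouping pixels dampens noise, here is a deliberately simplified sketch. It is not the method used in this research: real superpixel algorithms adapt the shape of each region to the image content, whereas this example simply cuts the image into square cells. The function names and the toy image are hypothetical.

```python
def grid_superpixels(height, width, cell):
    """Assign every pixel to a square cell and return a label map.

    Simplification: a regular grid stands in for a real,
    content-adaptive superpixel algorithm.
    """
    cells_per_row = (width + cell - 1) // cell
    return [[(y // cell) * cells_per_row + (x // cell) for x in range(width)]
            for y in range(height)]


def superpixel_means(image, labels):
    """Average the pixel intensities inside each superpixel."""
    sums, counts = {}, {}
    for pixel_row, label_row in zip(image, labels):
        for value, label in zip(pixel_row, label_row):
            sums[label] = sums.get(label, 0) + value
            counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


# Toy 4x8 image: a dark left half (10) and a brighter right half (50)
image = [[10] * 4 + [50] * 4 for _ in range(4)]
image[1][1] = 255  # a single "whited out" noisy pixel

labels = grid_superpixels(height=4, width=8, cell=4)
means = superpixel_means(image, labels)
print(means)
```

Taken alone, the noisy pixel (255) looks far brighter than anything else in the image. Averaged over its 16-pixel superpixel, the left region comes out at about 25, still closer to its true value of 10 than to the brighter region at 50.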

In order to successfully classify the superpixels, the scientists rely on descriptors: information associated with each superpixel to describe it. “The easiest descriptor to imagine is light intensity,” says Pierre-Henri Conze. To generate this information, he uses a certain type of deep neural network, called a “convolutional” neural network. What is the advantage of these networks compared to other neural networks? Trained on public mammography databases, they determine by themselves which descriptors are the most relevant for classifying superpixels. Combining superpixels with convolutional neural networks produces especially successful results. “For forms as irregular as tumor masses, this combination strives to identify the boundaries of tumors more effectively than with traditional techniques based on machine learning,” says the researcher.
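
The basic operation behind a convolutional neural network can be sketched in a few lines. In the example below, the filter values are chosen by hand to respond to a dark-to-bright transition. In a real convolutional network, these values are learned from training images, which is how the network works out relevant descriptors by itself. The function name, the filter and the toy patch are illustrative assumptions, not the network used in this research.

```python
def conv2d_valid(image, kernel):
    """Slide a small filter over an image (no padding, stride 1).

    As in most deep learning libraries, the kernel is not flipped,
    so strictly speaking this is a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[y + i][x + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for x in range(out_w)]
            for y in range(out_h)]


# Toy 3x5 patch: dark on the left (10), brighter on the right (50)
patch = [[10, 10, 10, 50, 50] for _ in range(3)]

# Hand-written filter that reacts to left-to-right brightness increases;
# a trained network would learn its own filter values instead.
edge_kernel = [[-1, 0, 1] for _ in range(3)]

response = conv2d_valid(patch, edge_kernel)
print(response)  # [[0, 120, 120]]
```

The response is zero over the uniform dark area and high wherever the filter straddles the boundary between the two regions, which is the kind of information that helps locate the edges of a mass.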

This research is in line with work by the SePEMeD joint laboratory between IMT Atlantique, the LaTIM laboratory, and the Medecom company, whose focus areas include improving medical data mining. It builds directly on research carried out on recognizing tumors in the liver. “With breast tumors, it was a bit more complicated though, because there are two X-rays per breast, taken at different angles and the body is distorted in each view,” points out Pierre-Henri Conze. One of the challenges was to correlate the two images while accounting for distortions related to the exam. Now the researcher plans to continue his research by adding a new level of complexity: variation over time. His goal is to be able to identify the appearance of masses by comparing different exams performed on the same patient several months apart. The challenge is still the same: to detect malignant tumors as early as possible in order to further improve survival rates for breast cancer patients.
